What is prompt optimization?

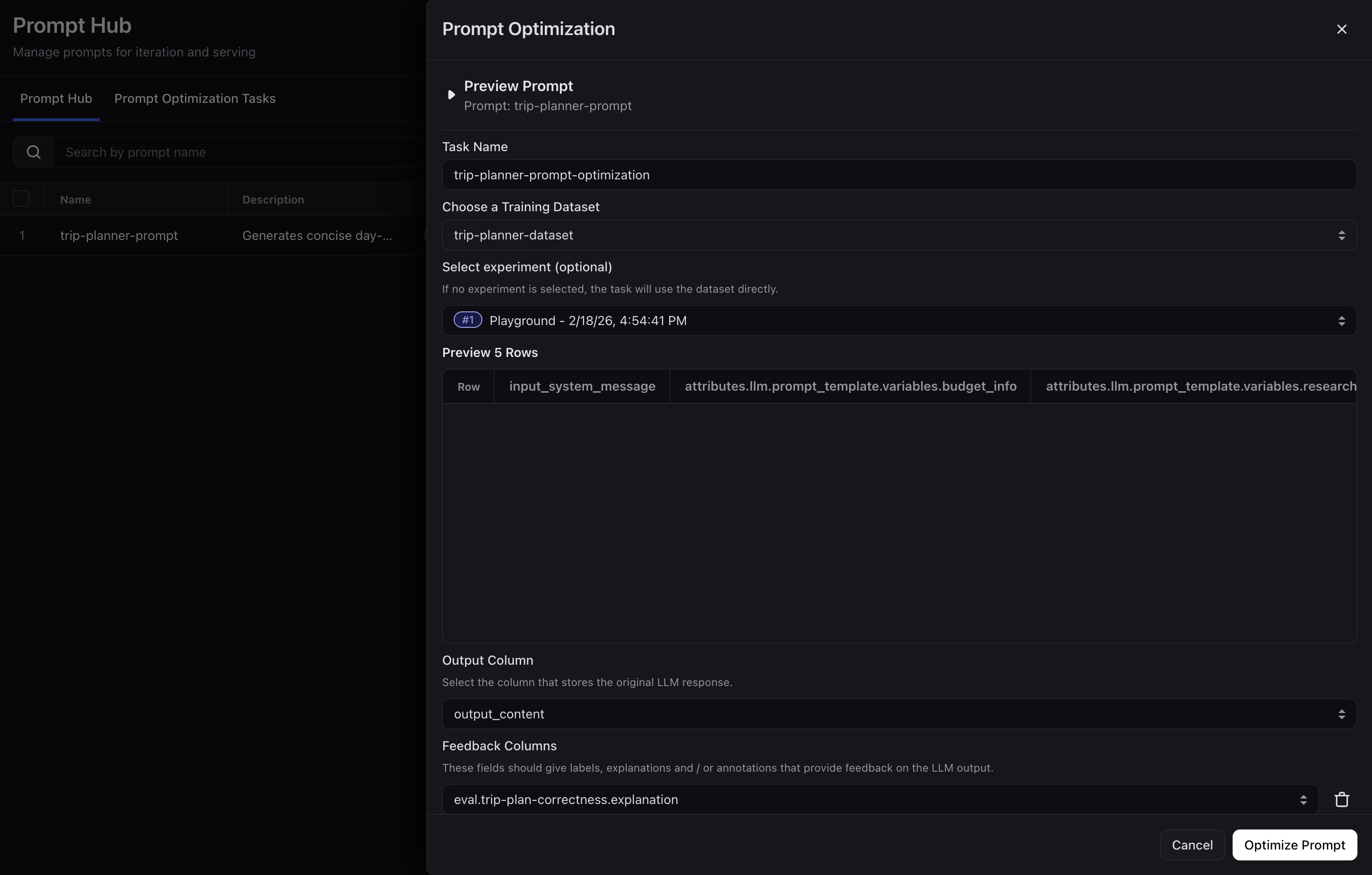

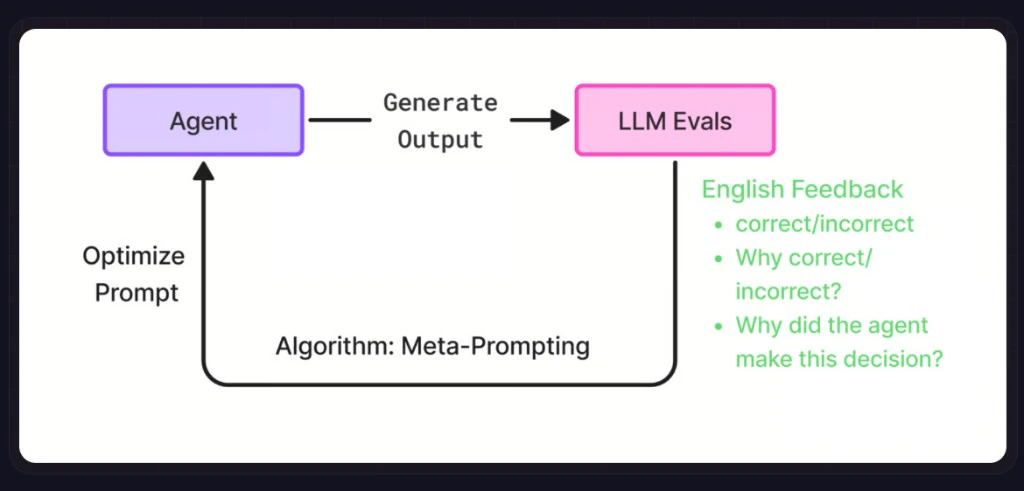

Prompt optimization (in Arize, primarily Prompt Learning) improves your Prompt Object using meta-prompting: an LLM reads your current prompt, evaluation feedback, and examples, then proposes a revised prompt that better matches your criteria. Instead of manual trial and error, the system runs a feedback loop: generate outputs, score them, feed the signals back, and repeat. The same engine runs whether you work in the UI, the SDK, Alyx, or Skills.

The prompt learning process
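
To make the feedback loop concrete, here is a minimal Python sketch of it. This is not the Arize SDK or its API: `Example`, `optimize_prompt`, and the three callables `generate`, `evaluate`, and `revise` are hypothetical names introduced only for illustration, and the toy stand-ins at the bottom replace real model calls so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Example:
    """One evaluation example (hypothetical structure, not an Arize type)."""
    input: str
    expected: str


# Assumed shapes for the three pluggable steps of the loop.
Generate = Callable[[str, str], str]                # (prompt, input) -> output
Evaluate = Callable[[str, str], Tuple[float, str]]  # (output, expected) -> (score, feedback)
Revise = Callable[[str, List[str]], str]            # (prompt, feedback) -> revised prompt


def optimize_prompt(
    prompt: str,
    examples: List[Example],
    generate: Generate,
    evaluate: Evaluate,
    revise: Revise,
    rounds: int = 3,
) -> Tuple[str, float]:
    """Return the best-scoring prompt seen and its mean score."""

    def run(candidate: str) -> Tuple[float, List[str]]:
        # Generate an output per example, score it, and collect non-empty feedback.
        results = [evaluate(generate(candidate, ex.input), ex.expected) for ex in examples]
        mean_score = sum(score for score, _ in results) / len(results)
        feedback = [note for _, note in results if note]
        return mean_score, feedback

    best_prompt = prompt
    best_score, best_feedback = run(prompt)
    for _ in range(rounds):
        if not best_feedback:
            break  # nothing left to fix
        # Meta-prompting step: a reviser (an LLM in practice) proposes a new prompt.
        candidate = revise(best_prompt, best_feedback)
        score, feedback = run(candidate)
        if score > best_score:  # keep a revision only if it scores higher
            best_prompt, best_score, best_feedback = candidate, score, feedback
    return best_prompt, best_score


# Toy stand-ins so the sketch runs without any model or network access.
def toy_generate(prompt: str, text: str) -> str:
    # Pretend model: uppercases its answer only when the prompt asks for it.
    return text.upper() if "uppercase" in prompt else text


def toy_evaluate(output: str, expected: str) -> Tuple[float, str]:
    if output == expected:
        return 1.0, ""
    return 0.0, f"expected {expected!r}, got {output!r}"


def toy_revise(prompt: str, feedback: List[str]) -> str:
    # Pretend meta-prompt result: append the instruction the feedback implies.
    return prompt + " Answer in uppercase."


examples = [Example("yes", "YES"), Example("no", "NO")]
best, score = optimize_prompt(
    "Classify the input.", examples, toy_generate, toy_evaluate, toy_revise
)
print(best, score)
```

In a real setup, `generate` and `revise` would each call an LLM and `evaluate` would be an evaluator or human annotation. The sketch keeps a revision only when its mean score improves, which is one simple acceptance rule among several possible ones.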

Why optimize a prompt?

Modern models are capable, but how you guide them drives reasoning, consistency, and accuracy. Most teams cannot hand-tune prompts at lab scale, which is why data-driven prompt optimization matters: you refine prompts from evaluations, natural-language feedback, and traces instead of guesswork, using the same engine in the UI or in code. In practice, this approach has improved coding accuracy on SWE-Bench by 10-15% and reasoning scores on Big Bench Hard by up to 10%.

What you get with Arize Prompt Learning:

Strong results

Proven improvements across key benchmarks, including SWE-Bench for Claude Code and HotPotQA.

No-code or SDK

Optimize through the UI or directly via the SDK. Same optimization engine, flexible workflows.

Version control

All optimized prompts are automatically versioned in Prompt Hub for easy rollback, comparison, and tracking.

Experimentation

Run side-by-side experiments on optimized versions in the Playground to identify the highest-performing configuration.

Workflow

- By Skills

- By Alyx

- By UI

- By Code

Use the Arize skills plugin with the arize-prompt-optimization skill to optimize prompts from traces and experiments via the ax CLI. Try asking your agent:

- “Optimize this classifier prompt using the last week of eval feedback.”

- “Revise my support prompt to reduce verbosity while keeping escalation rules.”