What is prompt testing?

Testing a prompt means running it against your data, scoring outputs with evaluators, and comparing versions before anything ships. Once a prompt is saved to Prompt Hub, attach a dataset, run the model, and open View Experiment for a full breakdown of results.

Why test a prompt?

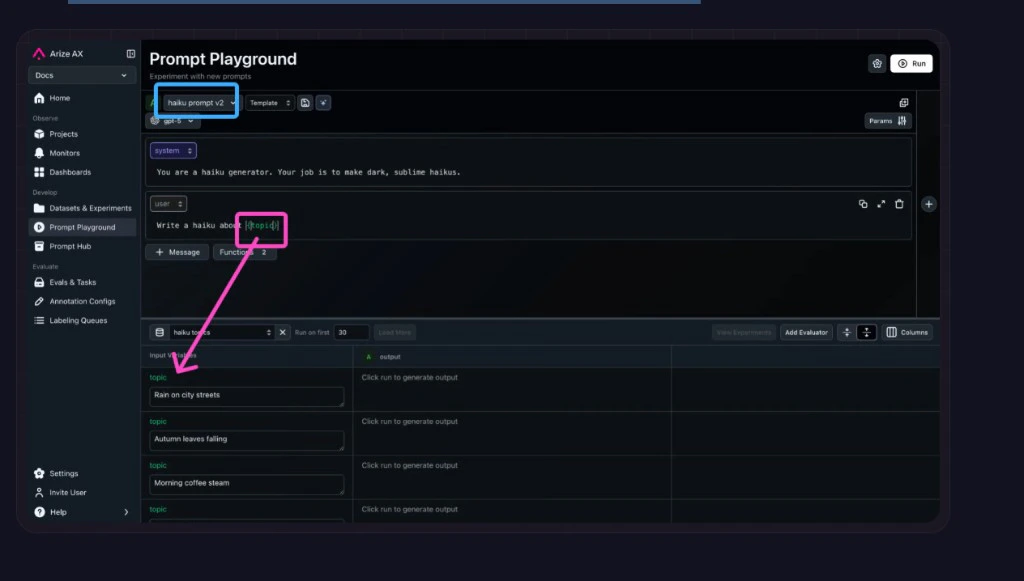

A prompt change that looks small can break things you didn’t expect. The Playground lets you run your prompt across your data, score outputs with evaluators, and compare versions side by side before anything ships.

Workflow

- By Arize Skills

- By Alyx

- By UI

- By Code

Coming soon!
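
Until the code workflow is documented, the loop described above (run each prompt version over a dataset, score the outputs with an evaluator, compare versions) can be sketched in plain Python. This is a minimal illustration only and does not use the Arize SDK: `fake_model`, `exact_match`, `run_experiment`, the two prompt templates, and the three-row dataset are all hypothetical stand-ins chosen so the example runs offline.

```python
from statistics import mean

# Hypothetical dataset: each row pairs an input with the expected output.
DATASET = [
    {"question": "2 + 2", "expected": "4"},
    {"question": "3 * 3", "expected": "9"},
    {"question": "10 - 7", "expected": "3"},
]

# Two hypothetical prompt versions to compare.
PROMPTS = {
    "v1": "Answer: {question}",
    "v2": "Compute and reply with only the number: {question}",
}

# Canned answers so the stand-in model needs no network access.
ANSWERS = {"2 + 2": "4", "3 * 3": "9", "10 - 7": "3"}


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call (assumption, not a real API)."""
    question = prompt.rsplit(": ", 1)[1]
    answer = ANSWERS[question]
    # Simulate the stricter prompt producing a bare number and the
    # looser prompt producing a wordy sentence.
    if "only the number" in prompt:
        return answer
    return f"The answer is {answer}."


def exact_match(output: str, expected: str) -> float:
    """Evaluator: 1.0 when the output equals the expected text, else 0.0."""
    return 1.0 if output.strip() == expected else 0.0


def run_experiment(template: str, dataset: list[dict]) -> float:
    """Run one prompt version over every row and return its mean score."""
    scores = []
    for row in dataset:
        output = fake_model(template.format(question=row["question"]))
        scores.append(exact_match(output, row["expected"]))
    return mean(scores)


# Compare versions side by side before choosing one to ship.
results = {name: run_experiment(tpl, DATASET) for name, tpl in PROMPTS.items()}
for name, score in results.items():
    print(f"{name}: mean exact-match score = {score:.2f}")
```

In this toy setup the wordier `v1` output fails the exact-match evaluator on every row while `v2` passes, which is the kind of regression a small wording change can introduce and a dataset run can catch.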